
What Exactly Is Shadow Banning?

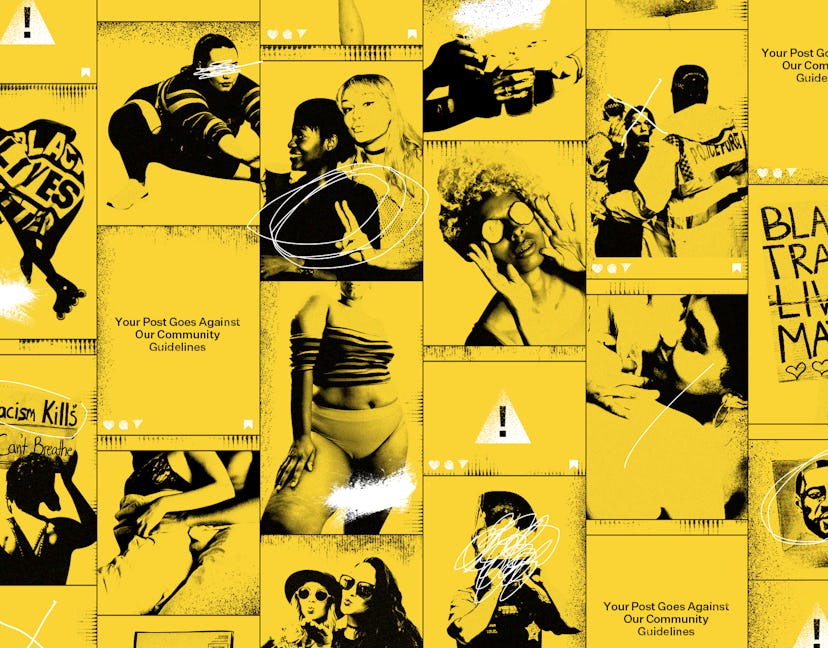

And how does it affect minoritised users?

This piece is the first in a two-part exploration into shadow banning and its effects. The second part looks in more depth at how shadow banning affects people from different communities.

The last few months have seen social media become a bigger part of many of our lives. During lockdown, platforms like Instagram have enabled us to stay connected, spread news, and mobilise communities, as we’ve seen play out with both COVID-19 and the Black Lives Matter protests. Social media is a space for alternative narratives, where the voice of minority communities carries weight and can hold brands, institutions, and influential individuals to account. It is also the workplace of a generation of influencers and content creators. However, all these users are beholden to algorithms, which, it’s become apparent, may be manipulated in a way that sees certain individuals and groups lose out – a process known as shadow banning.

Shadow banning: what is it & how does it work?

Shadow banning is the term used to refer to the removal or obscuring of content from Instagram without warning, driving down engagement. On her blog, social media marketing expert Alex Tooby defines it as “Instagram’s attempt at filtering out accounts that aren’t complying with their terms.” She adds: “The shadow ban renders your account practically invisible and inhibits your ability to reach new people. More specifically, your images will no longer appear in the hashtags you’ve used, which can result in a huge hit on your engagement. Your photos are reported to still be seen by your current followers, but to anyone else, they don’t exist.”

Officially, a post, photo, or video can only be removed from Instagram if one of the site’s content reviewers believes it goes against the platform’s community guidelines. It can also be downranked if it’s been labelled as misinformation by Instagram’s fact-checking partners. (Users can flag alleged violations of the guidelines to reviewers in a number of different ways, including reporting a post from within the app or filling out the contact form.) Once the content is restricted, users are given the option to request a review. This in turn sends the post, photo, or video to a second reviewer, and the content can be restored if it is concluded that it was removed in error.

In April 2019, TechCrunch reported that Instagram had taken the step to restrict posts that are “inappropriate but do not go against Instagram’s community guidelines,” such as posts that are sexually suggestive, but don’t necessarily depict sexual acts or contain nudity. According to Instagram (via TechCrunch), “this type of content may not appear for the broader community in Explore or hashtag pages,” crucial spaces that many creators depend on for reaching new followers.

TechCrunch also reported that content moderators were being trained “to label borderline content when they’re hunting down policy violations” and these labels will be used to programme algorithms to identify this content, too. However, what constitutes “borderline content” and what is being done with it remains unclear.

The recent Black Lives Matter uprisings have introduced a new side to shadow banning, where the surge in Instagram users trying to share content using the #blacklivesmatter hashtag or repost BLM content resulted in “action blocked” notifications.

“We have technology that detects rapidly increasing activity on Instagram to help combat spam,” Instagram’s PR team tweeted on June 1. “Given the increase in content shared to #blacklivesmatter, this technology is incorrectly coming into effect. We are resolving this issue as quickly as we can, and investigating a separate issue uploading Stories.” This acknowledgement from Instagram highlights what countless users have alleged previously: it's possible for certain communities to have their content blocked and their reach limited.
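To see how a spam filter keyed to “rapidly increasing activity” could misfire on a genuine surge, consider the minimal sketch below. It is purely illustrative: the `SpikeDetector` class, its window size, and its multiplier are all hypothetical assumptions, not a description of how Instagram’s systems actually work.

```python
from collections import deque


class SpikeDetector:
    """Flags a hashtag when one interval's posts far exceed the recent average.

    Hypothetical illustration only: the window and multiplier are assumptions,
    not Instagram's real thresholds or logic.
    """

    def __init__(self, window=5, multiplier=3.0):
        self.window = window          # number of past intervals used as a baseline
        self.multiplier = multiplier  # how far above the baseline counts as a spike
        self.history = deque(maxlen=window)

    def observe(self, posts_in_interval):
        """Record one interval's post count; return True if it looks like a spike."""
        if len(self.history) < self.window:
            # Not enough history yet to form a baseline.
            self.history.append(posts_in_interval)
            return False
        baseline = sum(self.history) / len(self.history)
        is_spike = posts_in_interval > self.multiplier * baseline
        self.history.append(posts_in_interval)
        return is_spike


detector = SpikeDetector()
# Five ordinary intervals, then a sudden surge of genuine posts to one hashtag.
counts = [100, 110, 90, 105, 95, 900]
flags = [detector.observe(count) for count in counts]
print(flags)  # [False, False, False, False, False, True]
```

A rule like this sees only volume. It has no way to tell a coordinated spam run from a community mobilising around a hashtag, so both are treated as the same kind of surge.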

Algorithms & bias

Facebook and Instagram have global safety and security teams of over 35,000 people, including those who review and remove content from the platforms. The demographics of these teams have not been disclosed, but if Facebook’s 2020 diversity report is anything to go by, meaningful representation is a long way off. In the U.S., 3.9% of the Facebook workforce is Black, while white workers make up 41% of the team. Looking specifically at technical roles, the number of Black people shrinks to 1.7%.

Because Instagram’s algorithms are programmed by humans, the biases of the people who build them can be imprinted on the programs themselves. Algorithmic discrimination within tech products, particularly social media platforms, is rife, as Sara Wachter-Boettcher outlines in her book Technically Wrong: Sexist Apps, Biased Algorithms, and Other Threats of Toxic Tech, and as Lorna Roth, a professor of communication studies, highlights in her essay Making Skin Visible Through Liberatory Designs. Roth emphasises that technology cannot be racist in and of itself, but it is “created by people who have framed and prefixed the infrastructure and underside by economic and cultural design decisions.” Roth suggests that “[technical designers] had a low level of awareness around the representations of race embedded in their practice.”

Despite attempting to make ‘neutral’ systems, programmers and technical designers do not exist in a vacuum. Rather, they live and work in a society that has always understood the bodies of Black people through constructs established under colonialism, including the idea that Black people are morally lax and present themselves as sexually aggressive. “Black bodies are hypersexualised anyway,” says influencer marketing expert Chloe Jones*, explaining that this is why she thinks they’re flagged up as sexually suggestive content more often than white bodies are.

Shahmir Sanni, one of the people involved in uncovering the Cambridge Analytica scandal, agrees, explaining that an algorithm “eventually stops showing your content because whatever [the platform’s] employees have input into the initial program doesn’t work in favour of [certain] content, particularly Black bodies, sex workers, and criticisms of whiteness.”

In October 2019, digital newsletter Salty – which is committed to amplifying the voices and visibility of women, trans, and non-binary people – decided to further investigate shadow banning. The membership-driven platform said that Instagram algorithms were rejecting its ads, which were portraits of the Salty community. According to Salty, Instagram’s reported reason for removing the content was that it was “promoting escorting services.” Salty says Instagram later admitted that these ads had been falsely flagged and reinstated them on the platform. At the time, an Instagram spokesperson told the Daily Beast: “Every week, we review thousands of ads and at times we make mistakes. We made mistakes here, and we apologise to Salty. We have reinstated the ads, and will continue to investigate this case to prevent it from happening again.”

In response to this incident, the Salty team collected data from their communities, to understand whether other people were having similar experiences, and to illustrate the impact that algorithms can have for users in minority groups.

Of the 118 participants who were surveyed, “many of the respondents identified as LGBTQIA+, people of colour, plus sized, and sex workers or educators,” Salty says. These Instagram users “experienced friction with the platform in some form, such [as] content taken down, disabled profiles or pages, and/or rejected advertisements.” When it came to content removal, according to Salty’s posted survey results, 54% had been told that they had violated “community guidelines” without any further detail – or had not been provided with a reason.

Salty said it had arranged to meet with Facebook to “discuss ways to make the policies more inclusive”, but two months prior to the release of the report, “Facebook ceased communication with Salty, and made no indication that they plan (or ever planned) on actually meeting with us to discuss policy development.”

Instagram did not respond when asked for comment about the Salty investigation and the alleged ceasing of communication.

What can be done about shadow banning?

What makes things even more difficult is that almost everything we know about how Instagram functions is guesswork, even for social media experts. “I think it’s one of those things with a lot of industry professionals: they think they know everything about Instagram but you genuinely don’t unless you work for Instagram,” Chloe Jones emphasises. And for those who do work at mega tech companies, it’s likely they are not able to share anything about their work due to strict NDAs. “Accept a job at any Silicon Valley company, and chances are someone will ask you to sign a nondisclosure agreement,” writes Jeff John Roberts for Fortune. He adds: “These documents, dubbed ‘contracts of silence’ by academics, were once only required of senior managers, but today they are as common in the tech world as fleece vests.”

Instagram did not respond when asked for more information about their company NDAs.

So, with the limited knowledge that we have on how social media algorithms function, where does that leave users, especially those in minority groups? Chloe Jones believes there are a number of ways to try to beat the algorithm on an individual level: “You have to use all aspects of Instagram because you have to remember that it’s a business and, as with any business, you get rewarded the more you use different functions. A lot of people are still using it as a photo-sharing app, whereas it’s not that any more – there are photos, videos, IGTV, stories. You have to maximise all of it.”

On top of this, though, there needs to be more clarification around platform policies, greater transparency as to how the algorithms work, and tighter regulations around their functionality.

On 15 June 2020, Adam Mosseri, the head of Instagram, announced in a blog piece that the company would be taking a harder look at “how our product impacts communities differently” with a focus on harassment, account verification, distribution, and algorithmic bias.

“We need to be clearer about how decisions are made when it comes to how people’s posts get distributed,” Mosseri wrote. “Over the years we’ve heard these concerns sometimes described across social media as ‘shadow banning’ – filtering people without transparency, and limiting their reach as a result. Soon we’ll be releasing more information about the types of content we avoid recommending on Explore and other places.”

Mosseri continued: “Some technologies risk repeating the patterns developed by our biased societies. While we do a lot of work to help prevent subconscious bias in our products, we need to take a harder look at the underlying systems we’ve built, and where we need to do more to keep bias out of these decisions.”

An Instagram spokesperson shared with Bustle that the company is in the process of setting up an Equity team, a task force dedicated to “ensuring fairness and equitable product development are present in everything we do” through working with Facebook’s Responsible AI team.

These moves are all positive, but in a world where bias is baked into the fabric of society, changes in policy and task forces are just a drop in the ocean. Identifying algorithmic discrimination is one thing, but it’s time we asked ourselves who created those algorithms in the first place, and how did their experience of the world shape them? Perhaps the only way forward is to scrap those algorithms and start afresh. As Sendhil Mullainathan, a professor of computation and behavioural science, wrote in his piece for The New York Times last year: “Software on computers can be updated. The ‘wetware’ in our brains has so far proven much less pliable.”

*name has been changed to protect the individual’s identity

This article was originally published on